SAN Migrations

At The PARSEC Group we are often called upon to perform SAN migrations. This consulting gig generally entails using operating system tools, and occasionally some custom scripts, to migrate from one storage device to another.

What is a SAN Migration

The usual scenario is that a customer may buy a SAN and use it for some number of years. After that time they want to upgrade to a new SAN to increase their speed and get away from escalating support costs. SAN vendors generally jack up the price after the third or fourth year the SAN has been in production. It seems they figure you are dependent enough on the system by that time that it'll be safe for them to increase the support cost. Their excuse is generally that they don't want to keep spare parts for older equipment. The truth is they know they can trap their customers between escalating support costs and the cost required to replace the SAN completely. Without a solid solution for migration, the customer is generally left between a rock and a hard place. They can pay almost as much as the SAN is worth just to keep what they have on support another year or they can buy a new SAN and pay even more and be forced to migrate the data by hook or by crook.

Another situation may be a customer who has been using internal disks but wants to move the data onto a SAN. This situation isn't usually driven by cost. Rather, it comes from a desire to improve performance and manageability, and perhaps also to centralize storage management.

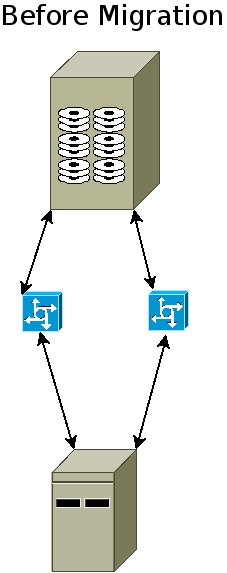

Check out this view of a single node's relationship to the SAN through its SAN switch.

The diagram above shows a single node on a SAN connected through a couple of fiber switches (some call those “fabrics”). The initiator (the SAN client) will have two HBA ports on its SAN card. Most people begin their migrations by installing the new SAN and connecting it to their existing fiber switches, or by cross-connecting a new set of switches into the old ones.

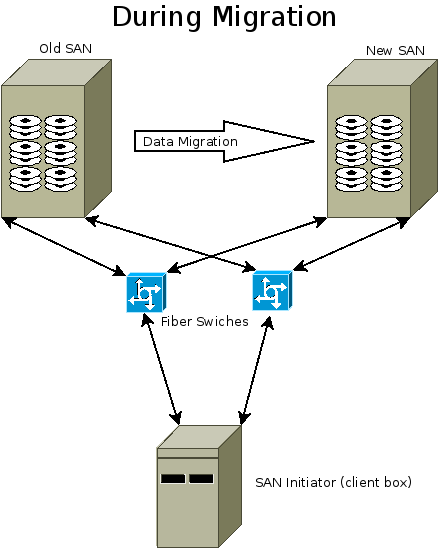

In this diagram you can see that the existing switches are preserved. Sometimes this isn't possible because the client wants to upgrade the speed or media types on the switch. So, this is the case only about half the time.

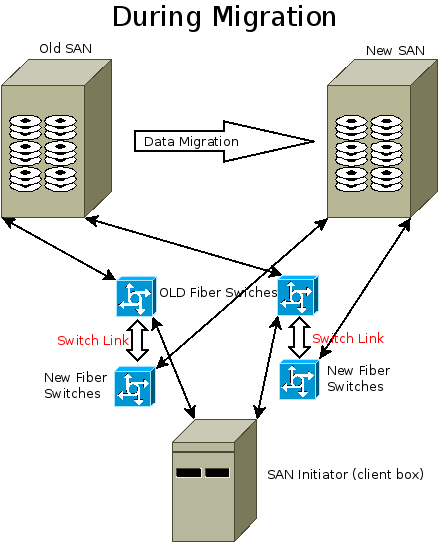

In the above diagram you can see a migration example where the client wants to move to a new SAN and is also going to ditch the old switches. However, since they don't want to take downtime until the old SAN and old switches are completely migrated away, they will continue to let the host talk to both SANs over an ISL (inter-switch link) between the old and new switches. Once the migration is done, the client will need to physically move the host from the old set of SAN switches to the new set. This is usually a very quick outage, just long enough to change the cables.

How Does PARSEC Do Migrations?

We do not rely on fiber “appliances” to do our SAN migrations. We believe that to be a mostly inferior method since it requires something to permanently spoof the old SAN. It's like its ghost is still around and you can never forget about it. There are a lot of other problems with SAN migration appliances, too. One of the biggest issues is that the appliance vendors usually charge you by volume (of your data migration or of your fiber host WWPNs). So, a big company that gets a SAN appliance for a major migration will pay a lot more.

The way we do our migrations is via our mastery of the host-based tools in the operating system. We actually re-federate the storage completely from your old SAN to your new one. In order to do this we use specific tools. Let me give you some detail for each OS we support.

- OpenVMS: we use the VMS feature known as “Volume Shadowing” in order to create mirrors of your old data onto your new SAN. When the mirroring completes, we break the relationship and you are off your old SAN forever. This usually requires zero downtime.

- AIX, Linux, HP-UX: We use the Logical Volume Manager (LVM) which comes with the operating system to migrate your logical volumes to new physical volumes. We add the new LUNs to the volume group alongside the old LUNs, create mirrors of each logical volume on the new SAN LUNs, and then remove the copies on the old LUNs once the mirrors are in sync. This usually requires no downtime to migrate. We also have scripts and tools which enhance the process by giving you status and estimated time of completion, and by managing multiple mirroring operations simultaneously where possible, which speeds up the migration considerably.

- Solaris 2.6 - Solaris 9: We can use either Solstice DiskSuite (called Solaris Volume Manager in Solaris 9) or Veritas Volume Manager to migrate your storage. Be aware that neither SDS/SVM nor VxVM is set up on Solaris by default. So, if you are using straight disk devices your migration will be more manual and require more outages. With SDS/SVM or VxVM it can usually be done online with little or no downtime.

- Solaris 10 and above: We use the ZFS filesystem to perform the migration in much the same way as we do for LVM. We attach the new SAN storage to the ZFS storage pool as a mirror of the old storage, let it sync onto the new storage, then break the mirror away from the old storage.

- Tru64 and Digital Unix: We can use both AdvFS and Logical Storage Manager (LSM) to migrate you from your old SAN to your new one. Be aware that Alpha systems cannot use anything faster than a 2Gbit HBA. So, you'll need to keep a switch around capable of that lower speed. You may also need to adjust your buffer credits on the new switch to compensate for that old infrastructure. However, the upside is that with both AdvFS and LSM we can do the migration with no downtime.

- IRIX: We can use either CXFS or the XLV volume manager to migrate your storage on IRIX with no downtime. If you are using XFS or EFS filesystems directly on disk slices, we can still perform your migration, but you should expect some outages as the old storage is retired.
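
To make the mirror-then-break pattern above a bit more concrete, here is a minimal sketch in Python. It is not our in-house tooling. It only builds the ordered command list for two of the cases described (Linux LVM2 and ZFS) and runs nothing, so the plan can be reviewed first. The function names, volume group, pool, and device paths are all hypothetical, and the time estimate assumes a constant copy rate, which real SANs rarely deliver.

```python
from typing import List


def lvm_migration_plan(vg: str, lvs: List[str], old_pv: str, new_pv: str) -> List[List[str]]:
    """Return Linux LVM2 commands, in order, for a mirror-based migration.

    Nothing is executed; the caller reviews the plan and runs it by hand.
    """
    plan = [
        ["pvcreate", new_pv],      # label the new SAN LUN as a physical volume
        ["vgextend", vg, new_pv],  # add it to the existing volume group
    ]
    for lv in lvs:
        # Add a second copy of each logical volume, allocated on the new LUN.
        plan.append(["lvconvert", "-m", "1", f"{vg}/{lv}", new_pv])
    # Sync progress check; rerun until every volume reports 100.00.
    plan.append(["lvs", "-o", "lv_name,copy_percent", vg])
    for lv in lvs:
        # Drop the copy that lives on the old LUN, leaving only the new one.
        plan.append(["lvconvert", "-m", "0", f"{vg}/{lv}", old_pv])
    plan.append(["vgreduce", vg, old_pv])  # remove the old LUN from the group
    plan.append(["pvremove", old_pv])      # wipe the LVM label from the old LUN
    return plan


def zfs_migration_plan(pool: str, old_dev: str, new_dev: str) -> List[List[str]]:
    """Return ZFS commands for the same pattern: attach, resilver, detach."""
    return [
        ["zpool", "attach", pool, old_dev, new_dev],  # mirror old device onto new
        ["zpool", "status", pool],                    # rerun until resilver is done
        ["zpool", "detach", pool, old_dev],           # break away the old device
    ]


def estimate_remaining_seconds(percent_done: float, elapsed_seconds: float) -> float:
    """Linear estimate of time left, assuming a constant copy rate."""
    if not 0 < percent_done <= 100:
        raise ValueError("percent_done must be in (0, 100]")
    return elapsed_seconds * (100.0 - percent_done) / percent_done


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    for cmd in lvm_migration_plan("datavg", ["lv_app", "lv_db"],
                                  "/dev/mapper/old_lun", "/dev/mapper/new_lun"):
        print(" ".join(cmd))
```

Keeping the plan as data rather than executing it directly is a deliberate choice in this sketch: on a production host you want a human to read every command before it touches a volume group. AIX and HP-UX LVM use different command names, so the Linux list above does not carry over as-is.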

What Have You Guys Migrated Before?

We have done migrations between all major storage vendors' equipment. On the old/legacy side we have seen a lot of EMC Symmetrix, NetApp 7-series, and Hitachi USP-V SANs. As far as newer gear we've landed on, it's typically been EMC VMAX, Hitachi F-Series, Pure Storage, Dell Compellent, NetApp Cluster-Mode gear, or HP 3PAR. Not all newer SANs support the special features required by OpenVMS. So, check with your vendor before dropping any money on new storage.

On the switch side of things we are very familiar with Brocade switches, which are mostly what we see in the wild. We have also had multiple experiences with Cisco MDS, Juniper, and QLogic. Every now and then we'll see old McDATA switches and have to deal with their quirky behaviors.